I’m more a database person than a spreadsheet person. I’m more used to using SQL to bend data to my will than all the fancy gubbins that you’ll find in Excel. With some chunky (for a spreadsheet) ad hoc data in hand I set about connecting it up to SQL Server so I could run a few choice SELECT statements on the data.

The details in this post work with 64bit editions of Excel 2010 and SQL Server 2008 R2

The basic ad hoc connection looks something like this:

SELECT *

FROM OPENDATASOURCE( 'Microsoft.ACE.OLEDB.12.0', 'Data Source="<full file path to excel file>"; Extended properties=Excel 12.0')...[<data sheet name>$]

However, if you try that in SQL Server Management Studio on a raw SQL Server installation you’ll get this error message:

Msg 15281, Level 16, State 1, Line 7

SQL Server blocked access to STATEMENT 'OpenRowset/OpenDatasource' of component 'Ad Hoc Distributed Queries' because this component is turned off as part of the security configuration for this server. A system administrator can enable the use of 'Ad Hoc Distributed Queries' by using sp_configure. For more information about enabling 'Ad Hoc Distributed Queries', see "Surface Area Configuration" in SQL Server Books Online.

Enabling Ad Hoc Remote Queries

I’ve linked to the Books On-Line entry in the above, but it is only part of the story. Once you’ve followed its instructions on opening the View Facets dialog, you have to hunt around a little to find where you turn on and off the ad hoc remote queries. To save you the time, they’re in the “Server Configuration” facet.

The alternative, also mentioned, is to issue a SQL Command. This command:

sp_configure 'Ad Hoc Distributed Queries', 1;

However, that still won’t work directly. You’ll get the following error message:

Msg 15123, Level 16, State 1, Procedure sp_configure, Line 51

The configuration option 'Ad Hoc Distributed Queries' does not exist, or it may be an advanced option.

The full SQL you need is:

sp_configure 'show advanced options', 1;

RECONFIGURE

GO

sp_configure 'Ad Hoc Distributed Queries', 1;

RECONFIGURE

GO

And you’ll get output that looks like this:

Configuration option 'show advanced options' changed from 0 to 1. Run the RECONFIGURE statement to install.

Configuration option 'Ad Hoc Distributed Queries' changed from 0 to 1. Run the RECONFIGURE statement to install.

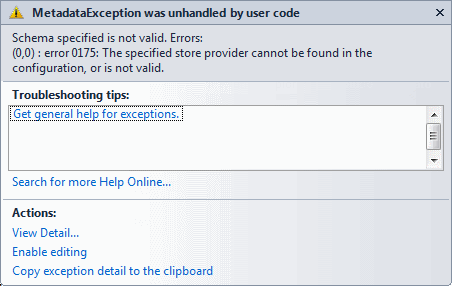

Now, we can try running the SELECT statement again, but this time the following error appears:

Msg 7302, Level 16, State 1, Line 2

Cannot create an instance of OLE DB provider "Microsoft.ACE.OLEDB.12.0" for linked server "(null)".

ODBC Configuration

This is because the ODBC driver is not configured correctly, go to the Control Panel –> System and Security –> Administrative Tools –> Data Sources (ODBC), alternatively you can just type “ODBC” in the Windows start bar.

Either way, you get to this dialog:

And as you can see the ODBC Driver needed for reading Excel files is not installed. A pretty big configuration failure. But it is easy enough to get the correct drivers. You can download them from Microsoft:

However, there is a problem if you have 32bit Office installed and 64bit SQL Server. The 32bit installer for the ODBC Drivers won’t work with 64bit SQL Server, and the 64bit drivers won’t install if it finds an existing 32bit installation of Office on the machine. For my desktop machine that was a problem, but luckily my laptop is running both 64bit versions of Office and SQL Server.

Finally

I eventually found this code snippet that works:

SELECT *

FROM OPENROWSET('MSDASQL',

'DRIVER=Microsoft Excel Driver (*.xls, *.xlsx, *.xlsm, *.xlsb);

DBQ=c:devPerformance-results.xlsx',

'SELECT * FROM [results$]')

The only issue that I have with this is that it uses MSDASQL which is surrounded in uncertainty. In one blog post it was said to be deprecated and 64bit versions won’t be available. Yet, there is a 64-bit version available for download. But for the ad hoc work I’m doing at the moment, it works.